Uncertainty about facts can be reported without damaging public trust in news – study

A series of experiments – including one on the BBC News website – finds the use of numerical ranges in news reports helps us grasp the uncertainty of stats while maintaining trust in data and its sources.

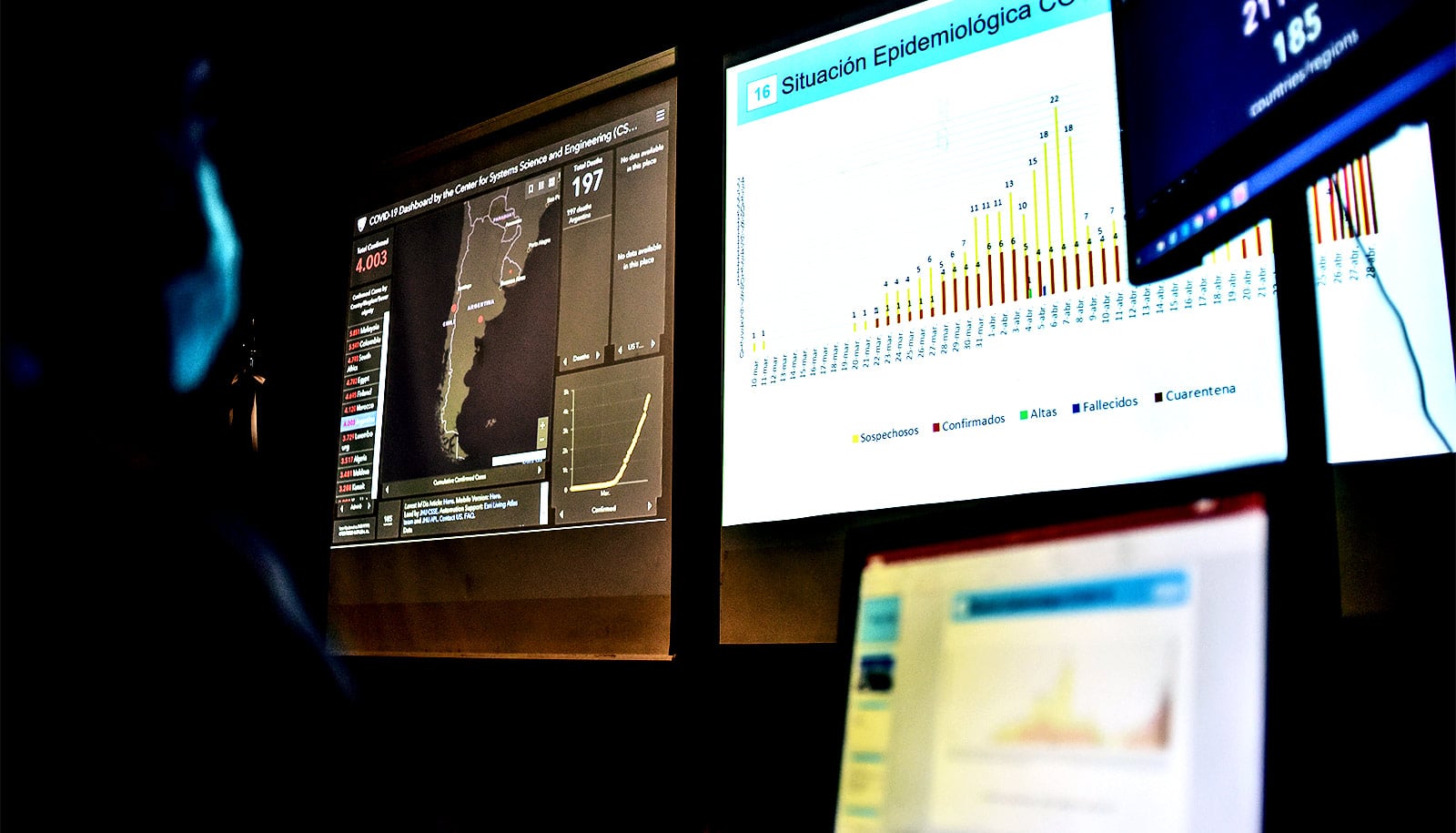

The numbers that drive headlines – those on Covid-19 infections, for example – contain significant levels of uncertainty: assumptions, limitations, extrapolations, and so on.

Experts and journalists have long assumed that revealing the “noise” inherent in data confuses audiences and undermines trust, say University of Cambridge researchers, despite this being little studied.

Now, new research has found that uncertainty around key facts and figures can be communicated in a way that maintains public trust in information and its source, even on contentious issues such as immigration and climate change.

Researchers say they hope the work, funded by the Nuffield Foundation, will encourage scientists and media to be bolder in reporting statistical uncertainties.

“Estimated numbers with major uncertainties get reported as absolutes,” said Dr Anne Marthe van der Bles, who led the new study while at Cambridge’s Winton Centre for Risk and Evidence Communication.

“This can affect how the public views risk and human expertise, and it may produce negative sentiment if people end up feeling misled,” she said.

Co-author Sander van der Linden, director of the Cambridge Social Decision-Making Lab, said: “Increasing accuracy when reporting a number by including an indication of its uncertainty provides the public with better information. In an era of fake news that might help foster trust.”

The team of psychologists and mathematicians set out to see if they could get people much closer to the statistical “truth” in a news-style online report without denting perceived trustworthiness.

They conducted five experiments involving a total of 5,780 participants, including a unique field experiment hosted by BBC News online, which displayed the uncertainty around a headline figure in different ways.

The researchers got the best results when a figure was flagged as an estimate, and accompanied by the numerical range from which it had been derived, for example: “…the unemployment rate rose to an estimated 3.9% (between 3.7%–4.1%)”.

This format saw a marked increase in the feeling and understanding that the data held uncertainty, but little to no negative effect on levels of trust in the data itself, those who provided it (e.g. civil servants) or those reporting it (e.g. journalists).
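As a rough illustration of the format described above, here is a minimal Python sketch that flags a figure as an estimate and puts its range in brackets. The function name `format_estimate` and its parameters are hypothetical, chosen for this example and not taken from the study. The only figures used are the unemployment example quoted in the text.

```python
def format_estimate(value: float, low: float, high: float,
                    unit: str = "%", decimals: int = 1) -> str:
    """Return a figure flagged as an estimate, followed by its range in brackets.

    Hypothetical helper for illustration only: the wording mirrors the
    example quoted in the article, not any published tool.
    """
    # A point estimate outside its own range would signal a data error.
    if not low <= value <= high:
        raise ValueError("value must lie between low and high")
    return (
        f"an estimated {value:.{decimals}f}{unit} "
        f"(between {low:.{decimals}f}{unit}–{high:.{decimals}f}{unit})"
    )


# Reproduces the example sentence fragment from the text.
print(f"the unemployment rate rose to {format_estimate(3.9, 3.7, 4.1)}")
```

Keeping the word "estimated" and the bracketed range together in one helper means a headline figure cannot be published with one but not the other.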

“We hope these results help to reassure all communicators of facts and science that they can be more open and transparent about the limits of human knowledge,” said co-author Prof Sir David Spiegelhalter, Chair of the Winton Centre at the University of Cambridge.

Catherine Dennison, Welfare Programme Head at the Nuffield Foundation, said: “We are committed to building trust in evidence at a time when it is frequently called into question. This study provides helpful guidance on ensuring informative statistics are credibly communicated to the public.”

The findings are published today in the journal Proceedings of the National Academy of Sciences.

Most experiment participants were recruited through the online crowdsourcing platform Prolific. They were given short, news-style texts on one of four topics: UK unemployment, UK immigration, Indian tiger populations, or climate change.

Uncertainty was presented as a single added word (e.g. ‘estimated’), a numerical range, a longer verbal caveat – “there is uncertainty around this figure: it could be somewhat higher or lower” – or a combination of these, as well as the ‘control’ of a standalone figure without uncertainty, typical of most news reporting.

The researchers found that the added word did not register with people, and the longer caveat registered but significantly diminished trust – they believe it was too ambiguous. Presenting the numerical range (from minimum to maximum) struck the right balance, signalling uncertainty with little evidence of a loss of trust.

Prior views on contested topics within news reports, such as migration, were included in the analysis. Although attitudes towards the issue mattered for how facts were viewed, when openness about data uncertainty was added it did not substantially reduce trust in either the numbers or the source.

The team worked with the BBC to conduct a field experiment in October 2019, when figures were released about the UK labour market.

In the BBC’s online story, figures were either presented as usual, a ‘control’, or with some uncertainty – a verbal caveat or a numerical range – and a link to a brief survey. Findings from this “real world” experiment matched those from the study’s other “lab conditions” experiments.

“We recommend that journalists and those producing data give people the fuller picture,” said co-author Dr Alexandra Freeman, Executive Director of the Winton Centre.

“If a number is an estimate, let them know how precise that estimate is by putting a minimum and maximum in brackets afterwards.”

Sander van der Linden added: “Ultimately we’d like to see the cultivation of psychological comfort around the fact that knowledge and data always contain uncertainty.”

“Disinformation often appears definitive, and fake news plays on a sense of certainty,” he said.

“One way to help people navigate today’s post-truth news environment is by being honest about what we don’t know, such as the exact number of confirmed coronavirus cases in the UK. Our work suggests people can handle the truth.”

Last month, David Spiegelhalter launched a podcast about statistics, ‘Risky Talk’. In the first episode he discusses communicating climate change data with Sander van der Linden and Dr Emily Shuckburgh, leader of the University’s new climate initiative Cambridge Zero.